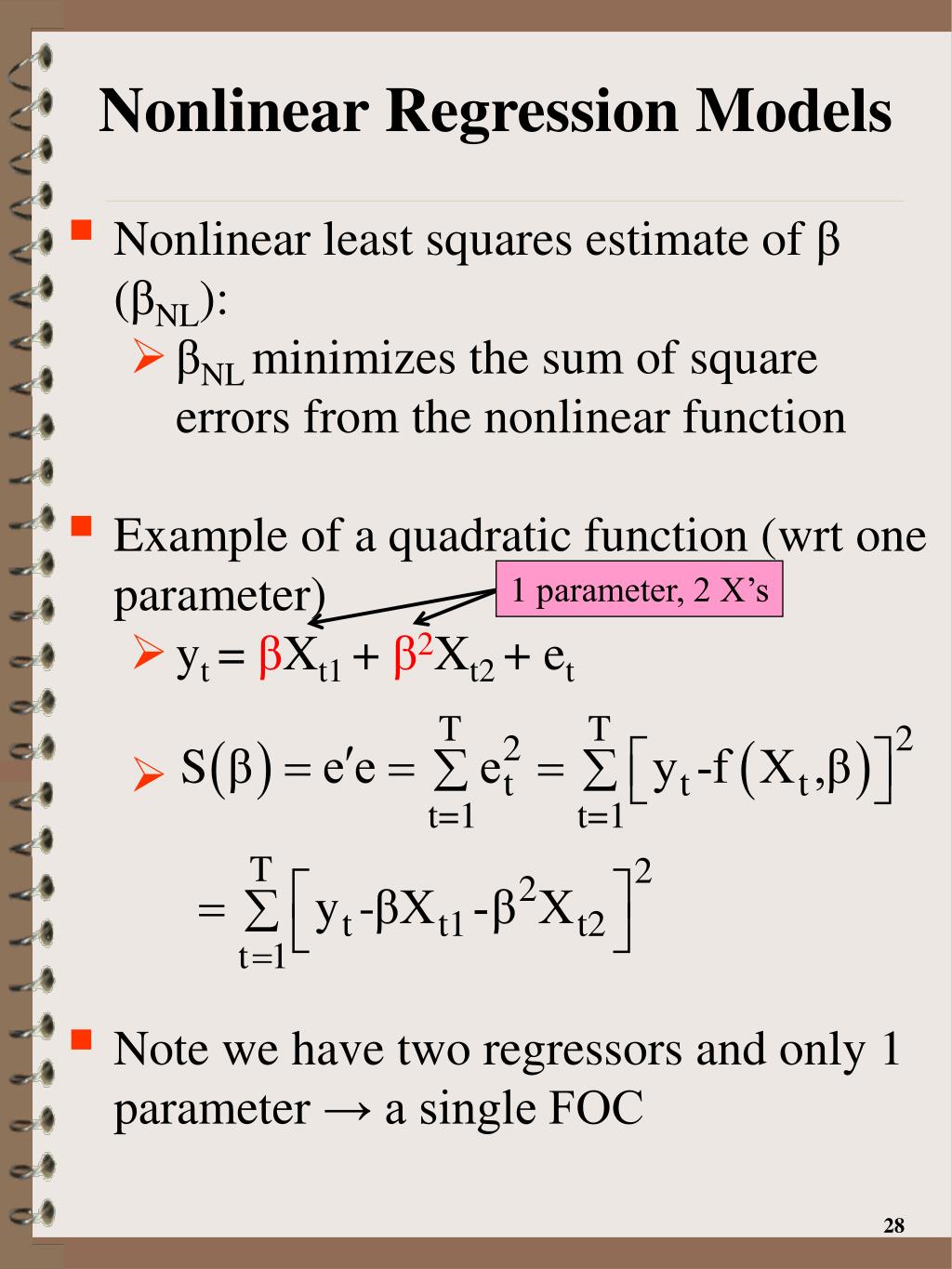

A tutorial on NLS Regression in Python and SciPy

Nonlinear Least Squares (NLS) is an optimization technique that can be used to build regression models for data sets that contain nonlinear features. Models for such data sets are nonlinear in their coefficients.

PART 1: The concepts and theory underlying the NLS regression model. You will enjoy it if you like math and/or are curious about how Nonlinear Least Squares Regression works.

PART 2: Tutorial on how to build and train an NLS regression model using Python and SciPy. You do not need to read PART 1 to understand PART 2.

We’ll follow these representational conventions:

- The ‘hat’ symbol (^) will be used for values that are generated by the process of fitting the regression model on data. For example, **β̂** (β_hat) is the vector of fitted coefficients.
- **y_obs** is the vector of observed values of the dependent variable **y**.
- Variables in plain style are scalars and those in bold style represent their vector or matrix equivalents. For example, y_obs_i is a scalar containing the i-th observed value of the **y_obs** vector, which is of size (m x 1).

We will assume that the regression matrix **X** is of size (m x n), i.e. it has m data rows and each row contains n regression variables. The **y** matrix is of size (m x 1) and the coefficients matrix is of size (n x 1) (or 1 x n in its transpose form).

Now let’s look at three examples of the sorts of nonlinear models which can be trained using NLS.

In the following model, the regression coefficients β_1 and β_2 appear raised to the powers of two and three, so the model is not linear in them.

(Image by Author)

Rental bike usage counts (Source: UCI Machine Learning Repository) (Image by Author)

We’ll build a regression model in which the dependent variable (**y**) is:

- Total_user_count: count of total bicycle renters

The regression variables matrix **X** will contain the following explanatory variables:

- season: the prevailing weather season
- yr: the prevailing year: 0=2011, 1=2012
- mnth: the prevailing month: 1 thru 12
- holiday: whether the measurement was taken on a holiday (yes=1, no=0)
- weekday: day of the week (0 thru 6)
- workingday: whether the measurement was taken on a working day (yes=1, no=0)
- weathersit: the weather situation on the day:
  - 1=Clear, Few clouds, Partly cloudy, Partly cloudy.
  - 2=Mist + Cloudy, Mist + Broken clouds, Mist + Few clouds, Mist.
  - 3=Light Snow, Light Rain + Thunderstorm + Scattered clouds, Light Rain + Scattered clouds.
  - 4=Heavy Rain + Ice Pellets + Thunderstorm + Mist, Snow + Fog.
- temp: temperature, normalized to 39C
- atemp: real feel, normalized to 50C
- hum: humidity, normalized to 100
- windspeed: wind speed, normalized to 67

Let’s import all the required packages:

    from scipy.optimize import least_squares
    import pandas as pd
    from patsy import dmatrices
    import numpy as np
    import statsmodels.api as sm
    import matplotlib.pyplot as plt
    # The module paths for the smf and st aliases are not given here:
    # import ... as smf
    # import ... as st
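To make the idea of a model that is nonlinear in its coefficients concrete, here is a minimal sketch of an NLS fit using `scipy.optimize.least_squares`. The model form (coefficients raised to the powers two and three), the synthetic data, and the names `model`, `residuals`, `beta_true`, and `beta_start` are assumptions made purely for illustration; this is not the bike rental model or data set.

```python
import numpy as np
from scipy.optimize import least_squares

# Hypothetical model, chosen only for illustration:
#   y = b0 + (b1 ** 2) * x1 + (b2 ** 3) * x2 + noise
# It is linear in x1 and x2 but nonlinear in the coefficients b1 and b2.
def model(beta, X):
    b0, b1, b2 = beta
    return b0 + (b1 ** 2) * X[:, 0] + (b2 ** 3) * X[:, 1]

# least_squares minimizes the sum of squares of this residual vector.
def residuals(beta, X, y_obs):
    return model(beta, X) - y_obs

# Synthetic data (assumed): m rows, n = 2 regression variables.
rng = np.random.default_rng(42)
m = 200
X = rng.uniform(0.0, 5.0, size=(m, 2))
beta_true = np.array([2.0, 1.5, 1.2])
y_obs = model(beta_true, X) + rng.normal(0.0, 0.1, size=m)

# NLS needs a starting guess for the coefficients.
beta_start = np.ones(3)
fit = least_squares(residuals, beta_start, args=(X, y_obs))

beta_hat = fit.x  # fitted coefficients
print("fitted coefficients:", beta_hat)
print("residual sum of squares:", 2.0 * fit.cost)  # cost is 0.5 * sum(residuals**2)
```

Because β_1 enters only as a square, β_1 and -β_1 give the same fit, so the starting guess determines which root the optimizer lands on. This kind of ambiguity is one reason the choice of starting values matters more in NLS than in linear least squares.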